Sunday, December 31, 2006

My Christmas present: clothing

Clothes are things I lump in the same category as grades and money; I wish they didn't matter, grudgingly acknowledge they do matter to many people, and set things up so that I can ignore them as soon as possible. For instance, I get only shirts and pants that match nearly all my other shirts and pants so that when I perform my complicated selection procedure of "pick the shirt at the top of the pile when you get up in the morning," chances are that I won't look too bad. All jeans go with all t-shirts, so this is easy.

Mom's making the compelling argument that if I go suit-shopping with her now, it saves me money (the suit and associated wardrobe will be a graduation-birthday-Christmas present, although I'd much rather have a new laptop, a dremel, or another two years of webhosting) and time (because I won't have to buy a new suit for many years, on account of I'm no longer growing... and because she'll stop nagging me about it). Since a professional wardrobe is one of those things that I'm going to have to do eventually and might as well get over with now, and since it will make my mother happy (and probably my dad's parents, too), I said okay.

So I get to go clothes shopping for a whole new wardrobe this winter break. Yay. I'd much rather be working on math instead...

Friday, December 29, 2006

Constraints

Design is a fun way to explore constraints, as it is (in part) the art of finding the correct constraints to gently bound an elegant solution to a problem. Bootstrap started as a series of projects between Eric Munsing and myself experimenting with how far we could push the "time" constraint. Can you go from problem area to polished product design in 60 hours while still getting sleep and homework done? Interface design and education taught me how to design with constraints on knowledge; how do you make something that "teaches" users as they go? Appropriate technology and sustainable design inspire you with constraints on resources. Using only natural materials, using only $25, using tools in your garage, what can you build?

The nice (and dangerous) thing about setting your own constraints is that you can also decide when to relax them. If I'm working in a two-color palette and I know it would be so much better if I could just have three shades, then I can decide whether to relent or force myself to see what I can do with two anyhow. I can excuse myself for not performing perfectly because I could only use two shades, or take 15 minutes, or only lines from Shakespearean plays. I can stop fretting about whether to use blue or purple because I can only work in black and white. I can stop fussing and get down to business.

The ability to design our own constraints is one of the skills I feel is most lacking in education, where so many rules are set for us when we're young. I struggled in my first design classes in college because I hadn't learned how to create my own certainty in the midst of uncertainty. I struggled in my first independent studies and self-managed projects because I was so caught up celebrating the lack of someone else's structure that I forgot to create my own to replace it.

When we're children, constraints are largely set for us: 5-paragraph essays, 30 minutes to do a timed test, be home before 7. How far can you go within those bounds? What are the reasons behind them, and can they be challenged? One of the major milestones of childhood is when the kid first realizes that adults are fallible, and so are their decisions. Another major milestone is when the kid learns how to challenge the decisions of an authority in a respectful and mature manner ("I don't WANT to go to bed BECAUSE!" doesn't count). And then there's the process of growing up, where the young adult is expected to start making more and more of their own decisions and setting their own constraints (in an ideal world; some children are pushed into this far too soon, others are only allowed to do it too late).

How we deal with this freedom defines who we are. Who are you when nobody is watching over your shoulder? Do you keep the same restrictions that your parents or teachers set for no apparent reason ("the 5-paragraph essay is the right way to write, so I'll use it")? Do you find your own reasons for the rules handed down to you ("I'm cranky if I don't sleep before midnight")? Do you reshape them to fit your own whims ("Mum used to only let me eat one candy a day, but 25 a meal is a more reasonable limit.")? Do you push the boundaries to discover your own ("my parents raised me Lutheran, but I'm studying Buddhism because...")?

I've never tried to use the Mayan instead of the Gregorian calendar to keep track of my appointments simply because I think many other things are more important. I haven't questioned the design of arcane bits of C syntax I've been forced to type because getting my project to work was higher priority at that moment. But I do succumb to the "you must graduate from college" maxim partly because I'm afraid of what it means to look inside and challenge it. Which constraints do you consciously choose to keep unquestioned and why?

If you have control over the walls that hem you, you have control over deciding where you want to go and who you want to be. Constraints don't enslave us; they make us masters of ourselves.

Note: This post has plenty of rough spots and could use a rewrite, but my constraint was that I had to post this and be in bed by 1:30am. So here you go, in all its flawed glory.

Back in Chicago.

While wandering NY, NJ, and MA visiting family, I fell horribly behind in all sorts of things. Catching up with that stuff now. Instead of checking email, I got to see my new twin cousins (Julian and Luke, a month old and we weren't allowed to touch them without rubbing our hands with alcohol every time), eat the best cheesecake ever (Junior's), and learn that three-year-olds have a disturbing propensity towards potty humor.

Joe and I booked our plane tickets to Japan last night. The two of us and Gui are going there at the very end of January to present our designs to roboticists and businesspeople looking for new product ideas. Our hands were shaking as we booked the tickets (more a function of being broke than nervous, though).

Now to catch up on all that work.

Monday, December 25, 2006

A web design newbie's reading survey

So where to start? Well, for inspiration, I've been flipping through the sites and layouts on csszengarden and misspato (the clean section - most of the rest, while good-looking, is too flashy to suit my style). When I get back to Chicago, I need to check out Web Design In A Nutshell and read through the remaining chapters that didn't get hit during Thanksgiving break.

Since I learned HTML back in high school, it seems that the "slice n' dice images into tables to make everything pretty" approach has been replaced by a focus on standards and separation of content and presentation, which is just fine by me; if there's a default semantic order to things, I don't have to make up new relationships for every project I set out on.

Tonight's reading was the "primer" articles in A List Apart, a web design blog with some well-written articles far beyond my current level of sophistication. Specifically, I'm reading through all the posts referenced in the ALA Primer and the ALA Primer, Part II. I'm calling out a few of the gems buried more deeply in these posts:

- 10 styles of webdesign - nice job of calling out various visual styles. I've noticed that a good number of computer geeks favor the HTMinimaLism style with a smattering of SuperTiny SimCity - my favorite is a balance between HTMinimaLism and Hand Drawn Analog.

- Speaking of visual styles: my mother calls mine "colorblind," which is why I'm grateful for the suggestion to pick your color palette from a favorite photograph, as I can take nicer photographs than I can create color schemes.

- A surprising interlude about what design is and isn't. Design "necessarily involves solving problems" and "satisfying the requirements of a user," which is "what distinguishes it from art or self-expression." Replace some of the web terms with engineering ones, and you could peg it as a Ben Linder lecture.

- What is the difference between a hyphen, a minus, an em-dash, and an en-dash? Note to self: take typography. Actually, make that graphic design classes... I'd still love to get at least a certificate someday.

- What about fonts? A study suggests that sans serif in readable column sizes (~11 words per row of text) is the way to go. Here's a list of beautiful typefaces. I've also been partial to Bitstream Vera Sans ever since Brian Shih suggested it on his blog.

- I'll have to come back to glish.com, which has links to css tutorials and samples of nice layouts, including a 3-column layout with a fluid middle column. There is also a walkthrough of a css recreation of a table-heavy website.

- Instead of using tables for markup, how about lists and css instead? This is one of those "why it's great to separate style from content" demonstrations. It also means you can use Son of Suckerfish dropdowns (original Suckerfish here) and then Sliding Doors to make the css more than pretty colored text.

- Then we turn those prettified lists into css rollovers with no preloads, or for the more graphically inclined, an image slicing replacement.

- I liked this article on the three-column layout not just for the column layout, but as an example of how to use css to drag semantically marked blocks of text into place.

- You can give each little content-block its own background and create visually separated columns as in layoutgala's example gallery, or keep the content block backgrounds transparent and use a background image to fake the "column" look.

- Speaking of background images... they go over the background color, meaning that you can create a background image for the top (or side or bottom) in one color to give a semantic content block a little colored heading (from the article on mountaintop corners). I always wondered how they did that.

- Make layouts resize gracefully with elastic design (now with concertina padding!). I like the line numbers on the code snippets in the second link.

- In addition to ordered and unordered lists, there are also definition lists. New tag for me.

With that bit of reading under my belt, it's time to put some things in practice, so somewhere in the next few "hurrah web design" ramblings here will hopefully be sketches of designs I'm thinking of.

The winter break to-do list

- Start learning web design in earnest. In the spirit of learning by doing, I'm going to be working both on my website and on Bootstrap's, the itinerant and currently ill-defined design group that's spontaneously struggling into selfhood among a group of Oliners.

- Set old ghosts to rest - there are a few things, academic and nonacademic, that have been nagging me for months. An essay I never revised. An email I didn't reply to. It's time to clear these from my conscience.

- Echo: Make a professional-looking presentation package for the Japan Design Foundation Robotics competition that Bootstrap is traveling to in January. It's a design competition, but we came up with a robotics application, were shocked when we became finalists and people started asking us where they could buy the things, and are now trying to figure out the technology to implement it while getting provisional patents filed and everything; I'm mentally treating it as a tech startup because that's what it might become.

- SCOPE magic. It's going to be a hectic next semester, and any sort of head start is very, very good. I haven't been able to convince my cousins that the PIC and Blackfin boxes I brought home are computers - they don't fit into their mental model of what a computer should be.

- Solidify next year's journey. I need a coherent writeup and the beginnings of plans for my trek across the globe in the name of engineering education.

- The mathiness! As Jon pointed out, I've got some research to catch up on over break...

Edit: Whoa, almost left the math out. Thanks, Jon. I wonder if I'll have enough time to learn dynamics and controls too, so I can hound Gui and Mark Penner incessantly with questions next term...

Edit: Man, this betting pool is old. Note that Mark Hoemmen has already lost his bet, as he's married. All others are still up in the air.

Sunday, December 24, 2006

ConnVex

Ash was one of the refs; I was the... "field reset attendant," which means I got to scurry around the field stacking neon yellow softballs between rounds. Andy was the photographer, and Matt Roy handed out crystals (and fielded a heck of a lot of questions from people coming up to his table).

If you ignored the continuously pounding rock music, it was a lot of fun, and we got to see a good number of inspired robot designs - things like using zip ties as "fingers" to rake in the balls or putting two small pegs on the bottom of a "ball shovel" that the robot later used as hooks to pull itself up onto the bar. The kids seemed genuinely thrilled to be there, and screams and shouts erupted during the bouts as loud as any I've heard at a high school basketball or football game (the few I've been to, anyhow). Gives me hope for the future generation of engineers - the best folks at a job are the ones who think it's a ton of fun.

It was a little weird to be on the other side of an event with kids involved. When the adults gave their requisite lecture on safety and sportsmanship, I fought the urge to roll my eyes; part of me still felt like one of the kids such diatribes are usually directed towards. At the same time, I understand why the adults have to say such things. It's just that a half-hour "Don't Do Drugs!" skit, a little one-page worksheet on racism, or a 45-second lecture on sportsmanship will just skid right off our consciousness unless a deeper framework has already been laid.

Tuesday, December 19, 2006

Posts from last year

Re: inability to ask for help

[Talking about the Olin-as-deep-end-of-pool analogy] If I sink, I will sit at the bottom and slowly drown as I stare at the sunlight filtering through the water. I won't ever let anyone save me. Some strange little part of my brain still thinks that if I let myself be saved, then I will not be able to save anybody else, when in fact it should be the other way around.

If I let myself be saved, that makes me fallible. Fallible people can't swoop down all godlike and help others. They might fall apart themselves. (from here)

Re: Eye.size > stomach.size syndrome

Behold a small dipper from the continuous stream of sage professorial advice which I'll forever remain grateful for. Man, we're lucky to have great teachers here.

Gill Pratt: "Life is the art of knowing which things you can let slide." (from here)

Re: Being simultaneously arrogant and underconfident

I hate being smart, but I like being smart. I hate that other people think that I'm smart (I'm not). At the same time, I like that they do. (I'm not.) I like looking smart. (I'm not.) I want to think that I'm smart, and sometimes I do. (I'm not.) And I hate that I like to look smart (because I'm not).

Hubris, anyone? (from the same post as the first quote)

I wrote this stuff when I was 19. I'm 20 now. I wonder if I'll still be able to call these things true when I'm 25. A small voice in the back of my head keeps saying it's narcissistic to quote yourself like this, so I'll stop now, reply to the comment backlogs, and then actually get back to "real work."

Thinkers and doers and the OLPC

Mitra: ...it's functional literacy... It's already happened in cable TV in India... The guys who set up the meters, splice the coaxial cables, make the connection to the house, etc., are very similar to these kids. They don't know what they're doing. They only know that if you do these things, you'll get the cable channel. And they've managed to [install] 60 million cable connections so far.

Functional literacy is a great start, but it is only a start. It's great to have functional literacy in many fields, but you need to be able to take at least a few into mastery. As Raymond explained to me a few days ago, it's not enough to acquire skills and take the information that's fed to you; if you want to do more, you've got to continually ask So What? and Why? and figure out how things work instead of memorizing the steps it takes to make them happen. Be a thinker, not just a doer. (Of course, thinkers must also be doers in order to bring their thoughts to fruition.)

More on thinking vs doing here.

There's a bad truism among certain activists that education is the key. The key to what? Like all truisms the idea is incomplete. Decades of valuing education over action have left social movements educated and impotent. Thinking about power is more important than thinking about education -- spreading information is only important if people will do something with that information. We've figured out how to spread the information, but people aren't doing anything with it.

To this I would argue that education is lots more than just the spread of information; it includes thinking about power and learning how to use it. The trouble is that this kind of thinking - doing-something thinking - is the type that you can't explicitly teach. It's part of what I'm aiming to say tomorrow during my Expo presentation on good textbooks. A good textbook (or a teacher or class, for that matter) isn't simply a way of conveying content from one mind to another. It's a way of creating (or recreating) an experience that transforms the learner's way of thinking. By definition, you can't come up with a standard way to make that happen - the point is nonstandardization, the point is figuring it out for yourself, and that's what makes teaching so tough (and so rewarding).

I found the above quote by following a comment link from a blog post on nonlinear learning that points out that knowledge tools (specifically, the OLPC) are not enough if students aren't taught how to use them. Several comments down the line, someone essentially says "OLPC is about hardware, not about how to use the hardware... other people need to pick up the educational aspect of things." It seems like we need people who are interested in education and fluent in teaching, who can travel and immerse themselves in different school systems, and who understand the technology behind the laptop well enough to see how it could best be implemented in various places.

Well, then. I may have found something productive to do with my global wanderings investigating engineering education next year. I'm going to need to do more work and research on this, but I wonder if it's an idea worth bringing up to the OLPC people at some point - and when and how (and to whom). I've been lurking on the project mailing lists since they existed, but have never actually spoken.

PS - David pointed out that I have a huge comments backlog - sorry about that, everyone! I'm not used to moderating these things (actually, I'm just going to turn moderation off and leave the captcha on; trying to cut down on blog spam here). Now to write replies to everyone!

Sunday, December 17, 2006

Other people's expectations

I've had an especially interesting series of discussions with professors over the last few months around this topic as it relates to learning (and grading). This thread of conversation has been going on ever since a conversation with Gill (badly paraphrased) about ECS, way back when I was actually complaining about it in what was probably my sophomore year. I was griping about how ECS didn't have any metrics (yes, the dreaded m-word; I also questioned this!) which meant you never knew what you were supposed to do. "Well," Gill explained, "we want you to stumble around and decide what you want to learn, and find out how to learn it." "But it's so inefficient!" I said. "Exactly."

Then this semester, I was talking to Ozgur about the vagueness of his comments during our design project reviews. "It's not enough feedback," I said. "We don't know what we did that was good or bad." "In the real world," Ozgur replied, "people might not tell you that. We give you lots of feedback in your earlier design classes*, and I wanted you to see how you would do without it. You need to decide for yourselves if something is good or bad." Adjust to feedback, but don't depend on it; it might not come. It's a variant of waiting for someone else to tell you what to do.

*which is open to debate, but I've found that folks in general are usually good about giving you really good, detailed critiques if you ask them in person later... I don't do this as often as I should.

If you're a teacher, how can you strike the balance between having your students do what you think is good and having them do what they think is good? Is it possible to imprint your own values too strongly onto them and prevent them from becoming their own person?

If you're a student, how can you strike the balance between doing what you think is good and doing what other people think is good? How can you tell whether what you want is what you want, or what you want because other people want you to do it?

Thursday, December 14, 2006

Craziness: the antidote to boredom

From Chemmybear's page comes an excellent example of the kind of ubiquitous curiosity that makes a good hacker. It's a passage written by Ira Remsen in a book by Bassam Shakhashiri.

While reading a textbook of chemistry I came upon the statement, "nitric acid acts upon copper." I was getting tired of reading such absurd stuff and I was determined to see what this meant...

In the interest of knowledge I was even willing to sacrifice one of the few copper cents then in my possession. I put one of them on the table, opened the bottle marked nitric acid, poured some of the liquid on the copper and prepared to make an observation. But what was this wonderful thing which I beheld? The cent was already changed and it was no small change either. A green-blue liquid foamed and fumed over the cent and over the table. The air in the neighborhood of the performance became colored dark red. A great colored cloud arose. This was disagreeable and suffocating. How should I stop this?

I tried to get rid of the objectionable mess by picking it up and throwing it out of the window. I learned another fact. Nitric acid not only acts upon copper, but it acts upon fingers. The pain led to another unpremeditated experiment. I drew my fingers across my trousers and another fact was discovered. Nitric acid acts upon trousers. Taking everything into consideration, that was the most impressive experiment and relatively probably the most costly experiment I have ever performed...

That reminds me of this diagram from the infamous Ghetto Indoor Pool Caper.

Crazy things always make better stories later on, regardless of whether they work or not. They also teach you more. The trick is really a two-step process:

- Do more crazy things

- Tell the stories about them so you remember what you learned

Wise fools

In other news, Chandra, Eric Munsing, and Jon Tse have all confirmed (over pizza) the existence of the following behavior pattern.

The Wise Fool Phenomenon: The probability that you will ask unashamed intelligent questions about a topic is inversely proportional to the amount you believe you are supposed to know about that topic.
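Written out as a (very loose) formula, with notation that's my own shorthand rather than anything rigorous: P is the probability that you'll ask unashamed intelligent questions about a topic, and K is the amount you believe you're supposed to already know about it.

```latex
P \propto \frac{1}{K}
```

Taken literally, this breaks down as K approaches zero (a probability can't go above 1), so read it as a tendency rather than a law.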

For instance, I'm much more likely to ask about mechanical engineering topics unashamedly, without fear of "looking stupid," than I am about electrical engineering ones. Ironically, this means I learn about mechanical engineering more rapidly when I decide to learn something about it. I recognize this is a stupid thing to do, and I'm trying to change it. It's one of the (many) reasons I don't do well in classes.

The best explanation Chandra and I could come up with for this is that you think you're already "supposed to have learned it," and that you are therefore being stupid (and will appear as such) and wasting everyone's time by having them explain things you should already know. If you aren't "supposed" to know it, your questioning (for some reason) provides amusement/insight/warm fuzzy feelings for the people you're asking questions of... and besides they can always say no, because you don't "have to know it" anyway. When it seems like you should already have something in your cup, it's very hard to empty it.

Corollary: You are more likely to exceed expectations when you don't know what they are. This assumes you've got an initial interest/aptitude in the subject and are pursuing it. If you're told to go to height H, you tend to go to H (or a bit higher if you want to look especially impressive), and then stop because you've "succeeded." If you can't make it to H, you "fail." On the other hand, if you aren't told a set height to reach, you just climb, and as long as you like it, you just... keep going.

There is a certain height at which it's reassuring to have someone look up and exclaim in amazement at how far you've gone, but usually by this point you're well past any H they would ever have set. If you don't make it far, that's okay; you haven't "failed," just chosen not to continue. Besides, at some point, the folks who advance any field must tread places nobody has ever gone before; why not start the process of trusting your own learning earlier?

There's a flip side to both of these as well. An initial goal or requirement can help you discover that something's there to learn, and give you a starting point at which to look. Lack of useful metrics makes it more difficult to reflect on your own learning. One reasonable balance is the idea of minimum and maximum deliverables (as Allen Downey calls them; Rob Martello calls them "circles") where you set an easily-achievable bar as an absolute goal but also toss out, as a tentative target, the bluest-sky dream you could ever imagine reaching. That way you get something done, but have freedom to do more (and a vague starting point to head towards if you haven't found something more agreeable by that time).

But that's getting off track. As far as I can tell, you can take advantage of the Wise Fool Phenomenon by:

- Learning things "before you're supposed to know them" (the reason I used to do ridiculously well in math classes; I'd already read books and asked lots of stupid questions about the stuff before we got to it in class).

- Beginning a learning endeavor by making the big disclaimer that you know nothing and will be asking lots of stupid questions.

- Actually asking lots of "stupid questions."

It's the last one that gets me. I don't feel like I've got the right to ask "stupid questions" unless I've been working hard and doing my utmost to keep up - I feel like I've got to go as far as possible by myself before calling for help. This works really well in the cases where I actually take the time to go as far as possible by myself before calling for help.

Unfortunately, "as far as possible by myself" is pretty far, so I usually don't get there. This means I often consider myself to not have "gone far enough." When I fall behind in classes this leads to "but I can't ask for help because I haven't worked hard enough" syndrome, which leads to me putting off talking to my prof until I've caught up on my own to "prove myself worthy," but I can't catch up right away because I'm already behind, which leads to me putting off asking for help even more...

Lynn says I need to be less afraid of wasting people's time, and the very fact that I'm afraid of it means that I typically don't do it. To that, I'd add that being afraid of wasting their time in the beginning can also lead to wasting more of their time at the end when I mess up because I didn't ask first, so ignoring the Wise Fool mandate is just a really stupid thing for me to do.

This has been Exhibit A in the "Why Mel Isn't Ready For Grad School Yet" series.

Wednesday, December 13, 2006

Call for procedure for attaining maturity

First: I am going to grad school someday. Even with the inherent unpredictability of the future, this is one of the events that has the highest probability of happening at some point in my life (pretty much the only other thing with higher certainty is the item "Mel dies.") This will probably be in engineering, and I want to become a professor someday. I will probably also at some point work in industry in some capacity, but as a way of gaining a better perspective for what I should be doing in academia. End statistical disclaimers here.

However, over the last year and a half or so I have been steadily realizing that now is not the time for me to go there. I'm not academically mature enough to be a graduate student; although intellectually I believe I can handle the material (I've been devouring research papers and graduate textbooks for fun for over 3 years with no trouble), I don't have the ability to focus on a research topic (or even know what I want to focus on!), work constructively in a lab for a long period of time, or manage my time on independent projects. I need to learn how to handle responsibility, and I need a broader perspective; in short, I'm not going to be ready to go to grad school by the end of May. (Nor am I sure that I am mature enough to go immediately into industry. I'm pretty much not ready for "the real world.")

I could probably fake it. I've been fortunate to have access to great classes and teachers, awesome libraries and information sources, and a brain that's quick enough on the uptake to fudge my way through things without developing much intellectual maturity. (Y'know, study skills, on-timeness, scheduling, actually preparing for things ahead of time instead of wandering to the whiteboard without a clue of what the lecture's been on...) I think a lot of Olin and IMSA kids did this through middle and/or high school; I've also been doing it through Olin and have so far been passing classes and all that other stuff because I can improvise and am shameless enough to do so.

However, without developing self-discipline and maturity, I'm not going to do anything close to what I could do if I was able to responsibly manage my time. This phrase is too common: "man, Mel can do all these things when she's distracted... imagine what would happen if she focused!" I want to learn how to focus because I do want to find out what happens. I don't want to waste any potential I could have to do good. (I don't think I have that much potential, or no more than anyone else - but what I do have, I want to use right.)

I'll be taking a "year off" right after graduation to travel, volunteer, and work on an independent project in engineering education that I really should describe here at some point. I've spent the last 9 years cramming content into my little skull at top speed, and this is the first real chance I've gotten to step back and take a breather, really reflect. I've got time and space to grow, and the willingness to do it.

What do you think are the most important things an incoming grad student (or adult in general) should know or have, and how did you obtain them? I'm not too worried about learning specific types of content, although if you have a favorite textbook or want to say "Learn Linux and LinAlg! It's EVERYWHERE!" that's cool too. I'm really worried about... well, maturity. Responsibility. Being an adult that can handle those things instead of an arrogant cocky punk kid who pretends to try, but in reality thinks that everything is a fun game. (This is my attitude towards the world; it's a lot of fun, but it leads to me blowing stuff off that should not be trivialized, and that needs to change.)

I realize that this is something that will happen anyway; maturity comes with experience, which comes with time. I'd like to hear your thoughts, though, O People who art Far More Wise than I.

Monday, December 11, 2006

The original competencies paper

There are great Olin documents all over the place that provide insights into the original design of... well, just about everything - and I feel there should be an archive, a repository, of all these important things. I'm sure they're kept somewhere, but I don't know where.

I'm going to talk to Ann Schaffner about this. If no such thing exists, it will soon.

The Olin Curriculum: Thinking Towards The Future

It's amusing to see some hints of the future - for instance, the conclusion suggests the possibility of expansion into the biology realm - and some artifacts from the past, such as sophomore integrated course blocks. It is amazing how much a school can change in less than 2 years.

Another section outlines Olin's curricular objectives and goals. Here's my take on how well we're accomplishing them (Miks, I'm procrastinating on my IS deliverable here and still owe you a good post). The standard disclaimer applies: this is in no way representative of anything Olin-official, and is based entirely off my own biased views and experiences.

- The curriculum should motivate students and help them to cultivate a lifelong love of learning. I think that we generally attempt to provide this in the execution of classes, but the structure within which those classes are placed (that is, the overarching curriculum) could be better designed to promote lifelong learning and love thereof. Yes, it's very possible for students to pursue their passions if they push hard enough, but that's true of any place; with its many independent studies, cocurriculars, and passionate pursuits, Olin is a much easier place to do this than most. But we can do better. In particular, it is difficult to cultivate a lifelong love of learning when you're trying to overload knowledge into your brain at such high velocity that it is no longer enjoyable.

- The curriculum should include design throughout, from the day students arrive on campus to the day they graduate. Day students arrive on campus: Candidates' Weekend, check. Day they graduate: SCOPE projects, check. Well, close enough. Olin has an amazing design component for an engineering school. Olin has an amazing design component for any school, design schools included. I'm not talking about the studio art skills (and if you say we don't have any, you haven't taken Prof. Donis-Keller's classes), but the teaching of what it means to think like a designer. I do think that our design foundations, namely Design Nature and UOCD, could use revision; they're arriving at the point where I'm afraid that if we run them the same way another year or two, they're going to become habits.

- The culmination of the curriculum should be a senior capstone that is authentic, ambitious, and representative of professional practice. SCOPE, check. Ambitious, yes. Authentic and representative of professional practice? Closer than pretty much anything other than a co-op could be. We've got our own budget, office space, minimal guidance, and a problem. However, we're still very much "not real-world workers" in that we've still got classes and finals and can't work on the project anywhere near full-time.

- Students should gain experience working as an individual, as a member of a team, and as a leader of a team. Everyone will get lots of experience with the first two, although individual work is less often project-based, which I can see leading to problems later in life when I have to build things all by myself. I've been lucky and gotten a chance to do this, but not everyone gets to have experience working as a leader of a team. This is compounded by the twin facts that (1) teams usually want to do well and (2) the first time someone leads something they'll be quite uncomfortable and mess up a lot. I'm not sure how to get over this. Perhaps learning leadership should be built more explicitly into the curriculum, and people who normally don't take leadership roles should be given more low-commitment, short-term, low-stakes chances to try it out and encouraged to do so.

- Students should learn to communicate logically and persuasively in spoken, written, numerical, and visual forms. Some Olin students are very good at this, some are not. I would love to see a higher standard required of all Olin students in this regard, but recognize there probably isn't time to cram more of this into our already packed learning schedules.

- The curriculum should include space for a true international/intercultural immersion experience. Study Away, check. I would love to travel with a professor or two, or a group of Olin students on a semi-academic excursion. (By the way, I'll be travelling a lot for the year after graduation; let me know if you'd like to join me in any leg of the journey. More details to come.)

- The goal is to graduate self-sufficient, motivated individuals able to articulate and activate a vision and bring it to fruition. An education that prepares students only to turn problem statements into proposed solutions is inadequate; education must also prepare students to recognize problems and to convince others to adopt solutions.

Sunday, December 10, 2006

Artist's statements

Self portrait: But it's shiny over there

All right. Mel, you like books, you like studying, so you're just going to sit down and read, and I'm going to draw you. There we go... and just hold that.

Hey, you gotta stay still. How am I supposed to draw when you keep moving? See what happened there? I'm going to go back and fix it, but I need you to stay still. Just focus, all right? That's good. That's a good girl. I know there's a lot of interesting things out there, but you can't do 'em all at once. You know that, right? Right. Good.

What's that? Wait, no - don't - hey, what are you doing? Don't stand up! Just - sit down, get back here! I'm not done yet! You're not done yet!

Habitat: BISY BACKSON (from this)

They said you might be looking for me.

I'm not usually anywhere in particular. Most mornings I grab my keys off the wall and run; when I get tired I come back to my room to collapse, and that's a day. I couldn't tell you in advance where I'll be in between. As long as I've got access to a flow of information, I'm plugged into the world, and it's good.

I'm a wanderer. It's when I'm not home that I feel most at home; it's when I'm in someone else's place that I feel I'm filling mine. My room is where I hang my keys and nothing more. It's not a place you'll find me if you look.

Thursday, December 07, 2006

Amusing fact collection

- Noise colors actually match up (roughly) with color-colors! If you played white light as music, it would sound like white noise (evenly distributed throughout all frequencies). Same with blue noise (high frequencies), pink noise (low frequencies), and... well, the analogy mostly falls apart with, say, brown noise, but this just didn't cross my mind before; I'd assumed they were arbitrary names, but apparently the actual visual colors are what they were named for.
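To make "evenly distributed throughout all frequencies" a little more concrete, here's a minimal sketch (standard library only, with a deliberately naive DFT) that compares how much power a noise signal puts into a low-frequency band versus an equally wide band just below the highest frequency. Brown noise is generated here as a running sum of white noise, i.e. a random walk, which is also why the color analogy breaks down for it: "brown" refers to Brownian motion (after Robert Brown), not to the color. The signal length, band width, and seed are arbitrary choices for illustration.

```python
import cmath
import math
import random


def band_power(x, lo, hi):
    """Sum of |X[k]|^2 over DFT bins lo..hi-1, using a naive O(n^2) DFT."""
    n = len(x)
    total = 0.0
    for k in range(lo, hi):
        coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        total += abs(coeff) ** 2
    return total


def low_to_high_ratio(x, width=16):
    """Power in the lowest `width` nonzero bins divided by power just below Nyquist."""
    n = len(x)
    low = band_power(x, 1, 1 + width)  # skip bin 0, the constant (DC) offset
    high = band_power(x, n // 2 - width, n // 2)
    return low / high


rng = random.Random(42)
n = 512
white = [rng.gauss(0.0, 1.0) for _ in range(n)]

# Brown noise as a random walk: each sample is the running sum of the white samples.
brown = []
level = 0.0
for w in white:
    level += w
    brown.append(level)

print("white low/high:", round(low_to_high_ratio(white), 2))
print("brown low/high:", round(low_to_high_ratio(brown), 2))
```

For white noise the ratio should land somewhere near 1, since no band is favored; for the random walk it should come out orders of magnitude larger, because the power piles up at the low end.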

- Wavelets are cute. They're little peeping things that pop up - PIP! - and then decay out real fast - POP! They're tiny little waves that you can string together to make bigger, more complicated waveforms (yes, it's another one of those break-it-into-basis-functions things like the Fourier and Laplace transforms), and in my head all these tiny wavelets start pipping and popping in and out like popcorn - pippippippoppoppippoppippippitypip! So cool.
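The Haar wavelet is about the simplest possible pip-and-pop: a pairwise average (the coarse shape) plus a pairwise difference (the little blip). Here's a minimal sketch of a multi-level orthonormal Haar transform and its inverse, assuming the input length is a power of two. Rebuilding the original signal exactly from the coefficients is the "string tiny waves together" part.

```python
import math

SQRT2 = math.sqrt(2.0)


def haar_forward(signal):
    """Multi-level orthonormal Haar transform; len(signal) must be a power of two."""
    n = len(signal)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    coeffs = list(signal)
    length = n
    while length > 1:
        half = length // 2
        # Pairwise averages (coarse shape) and differences (the little "pips").
        averages = [(coeffs[2 * i] + coeffs[2 * i + 1]) / SQRT2 for i in range(half)]
        details = [(coeffs[2 * i] - coeffs[2 * i + 1]) / SQRT2 for i in range(half)]
        coeffs[:length] = averages + details
        length = half
    return coeffs


def haar_inverse(coeffs):
    """Undo haar_forward by adding back each level of detail, coarsest first."""
    out = list(coeffs)
    length = 1
    while length < len(out):
        averages = out[:length]
        details = out[length:2 * length]
        merged = []
        for a, d in zip(averages, details):
            merged.append((a + d) / SQRT2)
            merged.append((a - d) / SQRT2)
        out[:2 * length] = merged
        length *= 2
    return out


signal = [4.0, 6.0, 10.0, 12.0, 8.0, 6.0, 5.0, 5.0]
coeffs = haar_forward(signal)
rebuilt = haar_inverse(coeffs)
print([round(c, 3) for c in coeffs])
print([round(v, 6) for v in rebuilt])
```

Because the basis is orthonormal, the sum of squared coefficients equals the sum of squared samples, so no energy is lost or invented along the way.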

- I ran across this in the book Noise and can't find another citation of this, but apparently "bell curve" doesn't always refer to the normal distribution. There are other kinds, like Rayleigh and Rice, and - hey, we didn't learn this stuff in ProbStat! This plus reading educational research papers where they're talking about crazy statistical manipulation of student data make me wish I could learn advanced statistics someday. (I'm aware there's an independent study going on next term, and I should probably talk to those people about getting their book list after graduation.)
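Both of these show up as the length of a two-dimensional Gaussian vector: Rayleigh when the vector has zero mean, Rice when one component has a constant offset (a line-of-sight component, in radio terms). A minimal sketch that samples both; sigma, the offset `nu`, the sample count, and the seed are arbitrary illustration values.

```python
import math
import random


def sample_magnitudes(n, sigma=1.0, nu=0.0, seed=0):
    """|X + jY| with X ~ N(nu, sigma^2), Y ~ N(0, sigma^2).

    nu == 0 gives Rayleigh samples; nu > 0 gives Rice (Rician) samples.
    """
    rng = random.Random(seed)
    return [math.hypot(rng.gauss(nu, sigma), rng.gauss(0.0, sigma)) for _ in range(n)]


rayleigh = sample_magnitudes(100_000)
rice = sample_magnitudes(100_000, nu=3.0)

# The Rayleigh mean has a closed form: sigma * sqrt(pi / 2).
print("Rayleigh sample mean:", sum(rayleigh) / len(rayleigh))
print("Rayleigh theory:     ", math.sqrt(math.pi / 2))
print("Rice sample mean:    ", sum(rice) / len(rice))
```

Histogram either list and you get a lopsided hump rather than the symmetric normal bell; the Rice hump slides to the right as `nu` grows.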

Sunday, December 03, 2006

Hackstar 2.0 : not just white males?

I don't usually go for the "rah rah rah gender" stuff, but this was interesting. I came across this snippet today while I was in the middle of researching for my DED paper (on technologies for distributed communication). Is the new era of collaborative technology on the internet repeating the same old cycle of empowerment based on some gender or cultural bias or difference?

It is no accident that the example innovators here [both old-school radio technology and the new web 2.0 startup rush] are all educated white boys (not girls) from middle-class or better backgrounds. There’s nothing wrong with being excited about the possibilities of new technologies, but it is important to see that new media don’t allow “anyone” to make software and content.

It's interesting to note that this statement would appear to be backed up by, for instance, the population distribution of Y Combinator founders. Data's sketchy and anecdotal here with insanely small sample sizes, but still - in what is currently four rounds of funding for multiple companies, each with multiple founders, we still see lots of young... white... males. This isn't to say that I think the hackers who got funded didn't deserve it; all the folks I know (8 Oliners and counting!) who have founded startups with these people are fantastic engineers, very, very good hackers, and great people. If I was the one betting on bright young startups, I wouldn't hesitate before giving these people lots and lots and lots of money.

However. Again, anecdotal evidence and n=1, but if Miks (a phenomenal engineer and roboticist - would that I had half her skill) gets this reception in a room full of startup geeks, what does it mean? (Statistically speaking, nothing.) When looking at hackstars, the question isn't "why these people?" They're at the top because they're good at what they do. The question is "why not these other ones?"

Is there something dissuading females and minorities from pursuing web startups (and in a broader picture, empowerment via the technology of the internet)? In this day and age, we'd like to think that it's not that we think these folks are less competent hackers, it's just that they don't... stand... out as much. (Why?) And the few of them who do are taken as relative rarities, exceptions who prove the rule. "Your position in the technical meritocracy is correlated with such an unusual identifier that I'm going to call attention to it in my identification of you."

I was going to write something here about my own experiences, but realized that was what I was trying to avoid. Instead, I'll list some possible boilerplate reasons for this phenomenon.

- Females and minorities just aren't good at "this kind of stuff." This is the horribly politically incorrect viewpoint, and not a whole lot of folks will have it (or at least admit to it).

- They're not interested. Are they interested but can't find a way in? Are they uninterested because the world's set such high barriers and anti-expectations against them doing this that the activation energy becomes high enough to dissuade folks who otherwise would have gone for it?

- This is an extension of the current math/science/tech imbalance. Fewer females and minorities (for instance) learning to code as a kid means there are fewer ready in their late teens to take the "next step" towards hackstardom.

- They tend not to pursue areas that they don't think they can change the world with. In a field dominated by people unlike you, making changes is tough. Also, how much good will web startup companies actually do? Maybe they're contributing in more productive areas than making shiny webpages. On the other hand, the internet is a tremendous tool with the potential to provide information access to lots of people who didn't have it before, and knowledge is power - couldn't this very easily be used to change the world, if you had the right goals at the outset?

- We've got too small a sample size and it's too early to tell. The small sample size appears to be indicative of a potential imbalance, though.

My thoughts have turned into incoherence and I should get back to that paper, so I'd like to throw this open for discussion. I'd especially like to hear the thoughts of those folks who have already gone the startup route on this. Do you think this is something we should be looking at, or is the playing field already as even as it gets and there's no need for worry?

Saturday, December 02, 2006

Seely Brown on passion-based learning

Schools can teach essential knowledge and critical thinking through somewhat traditional means. But they should complement that teaching with what Seely Brown called "passion-based learning" that focuses on getting students more engaged with topic experts.

In this new world, technology is essential because it provides every student with the means to experiment with building their own things. In the old-school apprenticeship model, the kid would enter the shop and (after sweeping the floor and such for a while) get their own hammer, paintbrush, or whatever it was to start playing with. You had reasonably easy access to the careers that were available to you; each town needed a smithy, you could count on an artist's guild in every major city, and so forth. Right, so your career options weren't terribly open ("Howdy, I'm Joe Peasant... I'd like to become a scholar" would have just gotten you clubbed upside the head) but learning was highly participatory; you didn't take Farming 101, you just... farmed.

Industrialization took that away for a while; how the heck do you give a kid their own factory to play with? When all the guns in the region are being mass-produced by a factory in a different state and your own town is the center of production for corn and only for corn, how do you gain exposure to a diverse set of careers? Technology allows us to give each kid simulations of many different complex systems (think SimCity and ZooTycoon) and access and exposure to experts from many different areas they might be interested in.

If you want something done, ask the busiest person you know.

The more you overcommit, the more that procrastination becomes intolerably expensive to engage ... yet it is when procrastination becomes exceedingly costly to do, it is then that extreme creativity emerges. In the impossible moment, miracles tend to happen. "Necessary procrastination" is a prime factor in the creative process. When the cost of procrastination increases, the probability for radical new thoughts to emerge increases as well.

If you want something particular done, asking the busiest person you know is no guarantee. But if you want something done, ask the busiest person you know. Something will happen - perhaps not what you originally intended, but something.

This may be the source behind my drive to overcommit, which I know is shared by plenty of other Oliners and IMSAns. Squeezing in heartfelt late night conversations between overdue problem sets makes them that much more costly, and therefore much more meaningful. Side projects smuggled in the back of lectures mean they've got to be worth your time enough to pursue despite the cost of missing information. It's a natural pressure cooker to inspire and winnow out the extraordinary.

Friday, December 01, 2006

Idea clusters and ice crystals

Anyway: interdisciplinary thinking rocks. I just read a paper titled "Data Crystallization Applied for Designing New Products" by Horie, Maeno, and Ohsawa that describes a data analysis tool that (among other things) algorithmically detects and graphically displays "meaning clusters" and connections between them in text. The on-screen graphics of ideas and their relationships look like little molecules bouncing around.

When they were talking about how they modified the display to make it easier to understand, they described how they were inspired by the "molecular" look to take on a "crystallization" metaphor. Idea clusters are like snow falling from the sky, with a few key words acting like "dust motes," nucleation points that cause other words to cluster around them. In order to make the clusters larger (and thus fewer in number and easier to understand), they decreased the "temperature," making it easier for the ideas to clump. Increasing "temperature" yields a more dynamic and complex picture of thoughts bouncing all over the place.
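The temperature knob maps pretty naturally onto a threshold on a word co-occurrence graph. What follows is a hypothetical toy, not the algorithm from the Horie, Maeno, and Ohsawa paper: two words get linked when they appear together in at least `temperature` sentences, and the clusters are the connected components. Lowering the temperature links more pairs, so clusters merge into fewer, bigger "crystals." The function names and the sample sentences are made up for illustration.

```python
from collections import Counter
from itertools import combinations


def co_occurrences(sentences):
    """Count how many sentences each unordered word pair appears in together."""
    counts = Counter()
    for sentence in sentences:
        words = sorted(set(sentence.lower().split()))
        counts.update(combinations(words, 2))
    return counts


def crystallize(sentences, temperature):
    """Group words into clusters; a pair is linked if it co-occurs >= temperature times.

    Hypothetical toy: "temperature" is nothing more than a co-occurrence threshold.
    """
    counts = co_occurrences(sentences)
    words = {w for s in sentences for w in s.lower().split()}
    parent = {w: w for w in words}

    def find(w):
        # Union-find root lookup with path halving.
        while parent[w] != w:
            parent[w] = parent[parent[w]]
            w = parent[w]
        return w

    for (a, b), count in counts.items():
        if count >= temperature:
            parent[find(a)] = find(b)

    clusters = {}
    for w in words:
        clusters.setdefault(find(w), set()).add(w)
    return sorted(clusters.values(), key=len, reverse=True)


notes = [
    "snow crystal ice",
    "snow crystal cold",
    "ice cold water",
    "design product user",
    "design product market",
]
for t in (2, 1):
    print(t, [sorted(c) for c in crystallize(notes, t)])
```

At the higher setting only the strongest pairs clump and most words float around alone; at the lower one everything condenses into two clusters, one about ice and one about product design.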

I realize this is mathematically, computationally, and chemically inaccurate - plenty gets lost in the cross-discipline bridging - but that the essence of an idea can make its way across boundaries like that is pretty awesome. Incidentally, the tool described in the paper just mentioned is great for picking out interesting connections between idea clusters thought to be previously unconnected.

Man, academia is cool.

Tuesday, November 28, 2006

Hackable == green

Readers of Make: blog have seen their maker's bill of rights, which I love. Worldchanging has a great article about how design for hackability is actually design for sustainability, since handy types can fix/upgrade their devices instead of tossing them when they get broken or obsolete. Sweet - I smell a change in the world.

Monday, November 27, 2006

Geek moment #0x3c52ab9

I wonder how you make a program that can automatically detect the bpm of a song (for syncing with slideshows, for instance)? Low-pass it and look for regular spikes at bass drum frequencies? There's always the option of having someone tap on the spacebar in rhythm to the first 30 seconds of a song, then averaging the time between taps.
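Both approaches fit in a few lines. The tap version is exactly the averaging described above. For the "look for regular spikes" version, this sketch swaps in autocorrelation: it assumes you've already low-passed the song and computed a per-frame bass-energy envelope (that step isn't shown), then finds the lag at which the envelope best lines up with a shifted copy of itself. The function names, the 60-180 bpm search range, and the synthetic test signal are assumptions for illustration.

```python
def bpm_from_taps(tap_times):
    """Tempo from spacebar-tap timestamps in seconds: 60 / mean gap between taps."""
    if len(tap_times) < 2:
        raise ValueError("need at least two taps")
    gaps = [later - earlier for earlier, later in zip(tap_times, tap_times[1:])]
    return 60.0 / (sum(gaps) / len(gaps))


def bpm_from_envelope(envelope, frame_rate, min_bpm=60.0, max_bpm=180.0):
    """Tempo from a per-frame bass-energy envelope via autocorrelation.

    Assumes low-pass filtering and energy framing happened elsewhere;
    frame_rate is envelope frames per second. Returns the tempo whose
    beat period (in frames) gives the strongest self-similarity.
    """
    mean = sum(envelope) / len(envelope)
    x = [v - mean for v in envelope]
    min_lag = max(1, int(frame_rate * 60.0 / max_bpm))
    max_lag = min(len(x) - 1, int(frame_rate * 60.0 / min_bpm))
    best_lag, best_score = None, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        score = sum(x[i] * x[i + lag] for i in range(len(x) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return 60.0 * frame_rate / best_lag


# Synthetic envelope: a "kick" every 50 frames at 100 frames/s, i.e. 120 bpm.
frame_rate = 100
envelope = [1.0 if i % 50 == 0 else 0.0 for i in range(1000)]
print(bpm_from_envelope(envelope, frame_rate))
print(bpm_from_taps([0.0, 0.5, 1.0, 1.5, 2.0]))
```

One catch with the autocorrelation route: a lag of two beats (60 bpm here) scores almost as well as one beat, so real songs tend to produce half- or double-tempo answers, which may be a point in favor of the spacebar.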

Okay, now I'm just procrastinating.

Live the questions now

Don't search for the answers, which could not be given to you now, because you would not be able to live them. And the point is to live everything. Live the questions now. Perhaps then, someday far in the future, you will gradually, without even noticing it, live your way into the answer. --Rainer Maria Rilke

So the question is: how do you live a question? I suppose this quote is telling me that I shouldn't look for the answer to that one and let it hang unresolved, but that would be an answer, which is contradictory.

It's always been a disquieting yet quietly triumphant thing to me that humans are able to live with logical contradictions while knowing they're logical contradictions. I definitely wouldn't go so far as to say that Gödel's incompleteness theorem explains what makes us human - it doesn't say anything of the sort - but contradictions are an amusing and pervasive phenomenon that I'm still trying to come to grips with. Does not compute... but it's kind of cool that it doesn't.

Sunday, November 26, 2006

You might be a future grad student if...

I mean, really like reading papers. Last year my favorite readings (edging out even sci-fi stories) were the Robotics papers for Gill's class and things like Shannon's original paper on information theory. I stayed up nights earlier this semester reading Papert's stuff. Now I'm kicking myself for not doing theoretical research earlier. All the research stuff I've done at Olin has been "hey, a new lab! buy equipment, haul furniture, hello I am a code monkey." Haven't done much actual research, to be honest. And in high school I read very few papers; they were mostly biotech, I just didn't get into that stuff as much (too many immunology acronyms to remember), and that was for fun, not for research of any sort.

I'm currently crawling my way through "Opportunistic beamforming using dumb antennas." It breaks my previous slow record for reading in English; 3 hours net reading and I'm 3 pages in (out of 19). The previous record holder was the Calculus of Variations textbook I crept through for my PDEs project two years ago, and that was 18 pages in 6 hours, three times as fast. It takes time for me to work through the math and look up new terms (did you know there's an imaginary error function? It's called erfi. I'm pronouncing it "er-fee," which makes it sound like the name of a puppy.)
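Python's `math` module has `erf` but no `erfi`, so here's a minimal sketch that evaluates er-fee from its Maclaurin series, erfi(x) = (2/sqrt(pi)) * sum over n of x^(2n+1) / (n! (2n+1)), which comes from integrating the series for e^(t^2) term by term. It's fine for moderate |x|; for large arguments erfi grows roughly like e^(x^2), and a library routine such as SciPy's `scipy.special.erfi` would be the better choice.

```python
import math


def erfi(x, tol=1e-15, max_terms=200):
    """Imaginary error function, erfi(x) = -i * erf(i x), via its Maclaurin series."""
    term = x   # x^(2n+1) / n! for n = 0
    total = x  # running sum of term / (2n + 1)
    for n in range(1, max_terms):
        term *= x * x / n
        contribution = term / (2 * n + 1)
        total += contribution
        if abs(contribution) <= tol * abs(total):
            break
    return 2.0 / math.sqrt(math.pi) * total


print(erfi(0.0))
print(erfi(1.0))
```

erfi(1) comes out to about 1.6504, and like erf it's an odd function, so erfi(-x) = -erfi(x).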

It's a great balance, actually. Mathematics, but applied mathematics. Complex and abstract enough to be entertaining, but tied to reality so I actually care enough to keep going when the entertainment factor fades. A mix of probability and discrete math, with a good heap of graph theory thrown in - my favorites. Something where good programming skills help, but it isn't all you do. Where hardware knowledge helps, but it isn't all you do. Where the boundaries between doing abstract mathy stuff, computational simulation, and actual physical implementation are paper-thin and crowded close together.

Did I mention the math? Ah, textual data - if it's mathematical, it's got to be written down, and so I can read it! In contrast to this, I'm still looking for a good textbook on how to lay out PCBs properly. It appears to be something people "pick up." (My PCBs are awful - I can use the software, but I really don't know what I'm doing with it aside from plugging the right things together. "They're all connectedlike!" "Holy cow Mel there are huge loops everywhere and what the heck did you do to the ground plane.")

I wish more electrical engineers would write good books about their work. I have a nagging feeling that I'll be trying to fill this gap as time goes on.

It's good that I'm forcing myself to take some time off to travel and work, otherwise I'd just stay in academia forever, and that wouldn't necessarily be good for my growth. Strength in diversity of experience, Mel. The paper you're reading now mathematically proves that (in a way).

Finish this section! Model Rayleigh fading! Sleep so you can get up when the sun rises and get more glorious natural light!
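Here's a minimal Monte Carlo sketch of the multiuser-diversity effect that opportunistic scheduling leans on. Under Rayleigh fading each user's channel power gain |h|^2 is exponentially distributed (mean 1 here), and a scheduler that always serves whichever of K users currently has the strongest channel sees an average gain of H_K = 1 + 1/2 + ... + 1/K instead of 1. This only shows the selection effect, not the paper's beamforming scheme; K, the trial count, and the seed are illustration values.

```python
import math
import random


def rayleigh_power_gain(rng):
    """|h|^2 for h = X + jY with X, Y ~ N(0, 1/2): exponential with mean 1."""
    s = math.sqrt(0.5)
    return rng.gauss(0.0, s) ** 2 + rng.gauss(0.0, s) ** 2


def average_scheduled_gain(num_users, trials=20_000, seed=1):
    """Mean channel gain when each time slot goes to the user with the best channel."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        total += max(rayleigh_power_gain(rng) for _ in range(num_users))
    return total / trials


def harmonic(k):
    """H_k = 1 + 1/2 + ... + 1/k, the expected maximum of k unit-mean exponentials."""
    return sum(1.0 / i for i in range(1, k + 1))


for k in (1, 2, 8, 32):
    print(k, round(average_scheduled_gain(k), 3), round(harmonic(k), 3))
```

The gain keeps growing with the number of users, just slowly (roughly like ln K), which is the sense in which more users with independently fading channels is a resource rather than a burden.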

Saturday, November 25, 2006

Blinky lights

The Blinky Lights theory of electrical engineering: they make us happy and confuse the heck out of everyone else. How strange must it seem to an outsider to walk into a lab and see adults cheering at a tiny flickering red dot? "What's that?" "THE LAST THREE YEARS OF MY LIFE HAVE BEEN VALIDATED!" And then a slurry of acronyms that leaves the observer bewildered as to why they're getting so worked up at an effect a preschooler could have produced with their thumb on a flashlight button...

I'm exaggerating and stereotyping for comic effect, but that's the thing about software and electrical engineering; the things that look most difficult are often the easiest to accomplish, and vice versa. Get this peripheral button to send a keystroke to the computer? Agonizingly hard. At the end of it, what do you get? "Congratulations, you typed a B." But once you have that, it takes all of 5 minutes for someone else to modify your code to send the entire text of Galileo to the computer with the same keystroke. "Wow! Brecht in a button!"

Little blinking lights symbolize the completion of an often tricky technical solution. It's like telling someone "wave this flag when you get to the top of Everest." The flag is a low-resolution indicator of the journey. You wouldn't ask the climbers why they didn't just wave the flag in their backyard in the first place. That's not the point.

Flight of fancy: instead of hooking your system up to the blinky-LED, route the end "success!" signal to a magical black box, which does the following when it's triggered:

- Plays the opening of Handel's Messiah in loud, high-fidelity stereo, followed by upbeat disco music

- Beams a multitude of brightly colored spotlights around the noble engineer who's just finished the... whatever it was

- Cues robots, strategically positioned around the room, to pop out and burst into applause and loud praise

- Causes a large cake and several bottles of cider and champagne to descend from the ceiling

- Sends a press release notifying the folks who print birth announcements that "module 21b8 of component 382c0x4 first demonstrated its functionality today," complete with (automatically snapped) photo of the module with its proud makers

Friday, November 24, 2006

Hair and sun

The lack of sunlight is bothering me this year more than I remember it doing in the past. I've always gotten peeved at a lack of long days, but now I actually grimace when I look out the windows at 5pm. Is this why people go to grad school in California? Anyways, darkness signals my brain to stop studying and start lazing around with books, which is bad during the end of term crunch. I have projects to finish, darn it. I want my sun.

Thursday, November 23, 2006

Back to IMSA

Walking through the hallways visiting teachers made me realize I was finally one of those crusty 20-year-old alumni that the 15-year-old kids look at with "who are you?" eyes. The last time I was back at IMSA, at least some of my younger IMSA friends had been there; now they're all graduated. None of the people at the couches (my old hangout between classes) knew me, nor did they know anyone who'd known me. Nobody was at the womb (another hangout nook under a staircase enclosed by a bench). I tried to sit in my old spot, but I'd grown a few inches and didn't fit quite as well any more.

Then I went through notesfiles and found out that while I've been chugging away at Olin, the folks I knew in high school have gone travelling, graduated, gotten jobs, gotten married, and then - baby pictures. That's a lot of people and things to keep track of - I wonder how older adults do it; they know so many more folks. I used to be amazed that my parents sent out hundreds of holiday letters every December; now I wonder how they keep the list down to so few.

Saturday, November 18, 2006

The babies! They are everywhere!

Also, I'm continuously surprised to find out that my shy little brother has somehow turned into a confident young man (really an adult - he's 18 on Tuesday) sometime in the almost-7 years I've been studying away from home. If I've got one regret about going away for school, it's that I didn't get to see him grow up. But I really like the person he's growing up into. I'm not-so-secretly hoping my grad school will be near his college so we can get to know each other again.

Happy birthday, kiddos. And a birthday shout-out to my cousin Mark (who goes to Babson) who passed the "I'm not a teenager any more!" mark this month as well. Mmm, milestones.

Random Acts of Engineering

"Hey! So, ah, Eric found this design competition..."

"Where are you?"

"We're in the library. I know you're in Illinois, but - are you by a computer?"

"I'm almost afraid to ask, but when's the deadline?"

"Ah.... midnight?" (It's 6:30pm.)

There goes my evening. The next 5.5 hours were spent happily chatting away on phones and laptops while simultaneously brainstorming, developing, and presenting 4 different product concepts for the (month-long, apparently) competition. Papers flew. Pixels flew. I completely bewildered my mother by asking her to pose next to an electrical outlet. And the final products were... not too bad, in my biased opinion - downright decent if you consider they were done with no planning on a randomly assembled distributed team in the span of a night, minus dinnertimes.

Random acts of engineering. That, plus the excellent potato-leek soup we had for lunch, just completely made my day.

Speaking of distributed teams, I just want to say I LOVE MY TEAM*. Phil and Hiroo from Scotland are gorgeous designers and absolutely wonderful to work with for our distributed engineering design course project, and Chandra was infinitely patient with everything and totally kickass at making our prototypes both structurally sound and manufacturable. Hopefully I made up for my ineptness over videoconferences (can't... read... lips...) by introducing shiny software; since it was a more mechanical-ish design challenge (coffee cup holders) I thought my computer skillz would go unused, but awareness of online collaboration tools was definitely helpful.

*originally intended to refer to the distributed team, but thinking about it right now I can honestly say I love every single team, group, and committee I'm on this semester - not just "like," "tolerate," or "uh... it's a valuable learning experience," I really love the people on them and the spirit in them, which is incredibly unusual... and boy, it feels good. I want this feeling more. The world is such a happy place when you have awesome teammates to work with.

It's 2am Eastern, so I'm getting an early snooze in an attempt to make up for sleep debt. G'night, folks.

Wednesday, November 15, 2006

Ooh, a memory dump.

- Go to Start > Run and type "debug" (no quotes).

- type "d f000:0" (no quotes, and those are zeroes).

- hey look, it's a memory hexdump!

- now type "u f000:0" (yep - no quotes).

- hey look, it's assembly!

- You can look at different chunks of memory by changing the numbers you type after the "d" or the "u."

- now type "?" (...right, no quotes) and hit enter. Muahahaha.

- q is for quit.
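Two things make that output less mysterious. First, `f000:0` is a real-mode segment:offset address, and the physical address is segment * 16 + offset, so `f000:0` means 0xF0000, the region where the PC's BIOS ROM lives. Second, the `d` display is just a hex dump: an address, sixteen bytes in hex, then the same bytes as printable ASCII. Here's a minimal sketch that imitates that layout for any bytes you hand it; the spacing is an approximation of debug's, not a byte-exact copy, and the function names are made up.

```python
def linear_address(segment, offset):
    """Real-mode x86 address: segment * 16 + offset, wrapping at 1 MiB as on the 8086."""
    return ((segment << 4) + offset) & 0xFFFFF


def dump(data, segment=0, offset=0):
    """Format bytes roughly like DOS debug's 'd' command: SEG:OFFS, 16 hex bytes, ASCII."""
    lines = []
    for start in range(0, len(data), 16):
        chunk = data[start:start + 16]
        hex_bytes = [f"{b:02X}" for b in chunk]
        left = " ".join(hex_bytes[:8])
        right = " ".join(hex_bytes[8:])
        # debug puts a dash between the 8th and 9th byte of each row.
        hex_part = f"{left}-{right}" if right else left
        # Non-printable bytes show up as dots.
        ascii_part = "".join(chr(b) if 32 <= b < 127 else "." for b in chunk)
        address = f"{segment:04X}:{(offset + start) & 0xFFFF:04X}"
        lines.append(f"{address}  {hex_part:<47}  {ascii_part}")
    return "\n".join(lines)


print(hex(linear_address(0xF000, 0x0000)))
print(dump(b"Hello, debug!\x00\x01\x02 and some more bytes"))
```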

When you procrastinate on art homework by sniffing around Windows memory dumps, you know you really need a Thanksgiving break. I'm off to the studio.

Learning journals and peer commentary

Blue Sky The First: Engineering Project Notebooks That Don't Suck

In the book "On becoming an innovative university teacher" by John Cowan, he suggests keeping a learning journal, a practice inspired by a book by Jenny Moon. The idea is that you keep a learning journal for every topic you study - like class notes, but with more emphasis on personal reflection - and then if you teach students in the subject later, you pass on your journals so they can read yours while they're making theirs. Keeping a learning journal helps you keep track of your own educational goals, and serves as a record for the transformational thought processes you've gone through.

"Sweet," I thought when I first read that. "I'll do that!" A month later, I'd found that the learning journal concept works beautifully for art and writing, but not so great for engineering. Data on paper is beautiful but dead. You can't type it, compile it, run it, or analyze it mathematically without a lot of rote cranking. I'll be darned if I'm going to print out my code and neatly paste it inside one of those little lab notebooks just so I can point it out to my students years later.

Blogs are the closest things to learning journals I've found on computers, but if I go completely electronic, I lose the ability to quickly sketch diagrams or hash out math notes. Maybe other people can type LaTeX or LyX fast enough to keep up with a lecture - I definitely can't, and besides, I do math with plenty of diagrams. And even if I type LaTeX into a blog (assuming I can even figure out how to make it display, whether with MathML or something else), it still has no mathematical meaning; I have to re-code it all over again in Maple or Matlab to be able to manipulate the numerical data.

Enter Matt Tesch, who provided a starting point - a proposal to combine handwriting recognition with LaTeX and a computational library. The idea is that if you have a tablet PC, and you scribble your math syntax in, it'll be recognized as two things simultaneously: (1) LaTeX syntax, so your chicken-scratch suddenly turns into beautifully typeset math, and (2) computational entities, so that you can finish scribbling an equation, highlight it, click "evaluate," and actually get an answer. Your handwriting will have both mathematical and typographical meaning, and it'd be a start in taking math notes this way, at least.
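The core trick, one recognized expression carrying both a typeset form and a computable value, can be sketched with a tiny expression tree. This is a hypothetical sketch: the class names are made up for illustration, only addition and fractions are supported, and the handwriting-recognition step that would actually build the tree isn't shown.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class Num:
    value: float

    def to_latex(self) -> str:
        return f"{self.value:g}"

    def evaluate(self) -> float:
        return self.value


@dataclass
class Add:
    left: "Expr"
    right: "Expr"

    def to_latex(self) -> str:
        return f"{self.left.to_latex()} + {self.right.to_latex()}"

    def evaluate(self) -> float:
        return self.left.evaluate() + self.right.evaluate()


@dataclass
class Frac:
    numerator: "Expr"
    denominator: "Expr"

    def to_latex(self) -> str:
        return rf"\frac{{{self.numerator.to_latex()}}}{{{self.denominator.to_latex()}}}"

    def evaluate(self) -> float:
        return self.numerator.evaluate() / self.denominator.evaluate()


Expr = Union[Num, Add, Frac]

# What a recognizer might produce for a scribbled "1 over (2 + 3)".
expr = Frac(Num(1), Add(Num(2), Num(3)))
print(expr.to_latex())  # typographical meaning
print(expr.evaluate())  # mathematical meaning
```

The design choice worth noting is that neither output is derived from the other: the tree is the single source of truth, and LaTeX and numbers are just two ways of walking it.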

So the two of us are plugging away at that - Matt on the handwriting rec side (since it's his independent study this semester anyhow) and myself on the typography and the numerical computation backend (since it's a hobby of mine anyhow).

Ideas, suggestions, and things we've completely missed are very, very welcome. Is this idea completely useless? Are there things already out there that do what we're trying to do? Do you know of any projects or papers we should look into in the process of figuring out how to make this happen? And the questions I'd really like to find the answer to - do you keep an engineering project notebook? Do you like it? How could we design a program that would entice you to keep your project notebook on your computer instead?

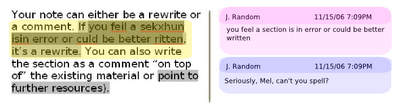

Blue Sky the Second: Peer Review (interface for simultaneous commentary on a paper)

Then there's the problem of commenting on other people's papers. Ben Salinas pointed out something to Matt Ritter and me one afternoon: tools like Google Documents and Gobby do a great job of letting you edit simultaneously, but what happens if I have a paper draft and want to let a lot of people make comments on it? I can ask them to write comments on the paper as a whole, in which case their comments can't be tagged to specific locations in the document.

This isn't a big deal when you're asking about a 1-paragraph email; it's a problem when you're writing a 300-page thesis. I can also ask them to use Track Changes and Notes in Microsoft Word, but let's face it: that's a less than perfect solution. Aside from the red lines swarming all over the place, it's not really collaborative, and your poor commenters will end up with a dozen different versions in their inbox which they'll have to merge.

The above image is a quick attempt at drafting an interface solution. It's pretty simple - all it does is attach comments to specific locations on a paper. In order to comment on (blue) or suggest a rewrite of (red) one area of a document, you just highlight that area (no matter how big or small it is) and type. Your comment will be "pinned" to that location, and the section of the document you just wrote about will be highlighted subtly in order to show that it's been commented on. Each successive comment on the same section darkens the highlight. You end up with a commentary heatmap of sorts - heavy highlighting indicates the parts a lot of people are talking about. There are no "threaded discussions"; if you want to comment on someone else's comment, highlight the same part as they did and post a reply.
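One way to compute the "commentary heatmap" is to store each comment as a half-open character range and count how many ranges cover each character; that count is how dark the highlight gets. This is a minimal Python sketch under that assumption. `Comment` and `highlight_depth` are hypothetical names, and the difference-array trick is just one reasonable choice, not how any existing tool does it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Comment:
    start: int  # index of the first highlighted character
    end: int    # index one past the last highlighted character (half-open)
    author: str
    text: str
    kind: str = "comment"  # "comment" (blue) or "rewrite" (red)


def highlight_depth(doc_length: int, comments: List[Comment]) -> List[int]:
    """Return how many comments cover each character: the 'heatmap'.

    A difference array keeps the cost at O(doc_length + len(comments))
    instead of touching every character of every comment.
    """
    delta = [0] * (doc_length + 1)
    for c in comments:
        delta[c.start] += 1
        delta[c.end] -= 1
    depth, running = [], 0
    for i in range(doc_length):
        running += delta[i]
        depth.append(running)
    return depth


doc = "The quick brown fox jumps."
comments = [
    Comment(4, 9, "ben", "Is 'quick' the right word?"),
    Comment(4, 19, "matt", "Tighten this phrase.", kind="rewrite"),
    # A reply is just another comment pinned to the same range.
    Comment(4, 19, "ben", "Agreed with matt."),
]
depth = highlight_depth(len(doc), comments)
```

Mapping each depth value to a progressively darker highlight color gives the effect described above, and replies need no special threading structure.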

Here's the catch: these comments aren't usually all displayed, because there might be thousands on a very long paper. In order to view the comments on a section, you highlight that section. In this image, some of the text has been highlighted (yellow) and the two comments applied to that area are displayed on the right. If you want to see all the comments, select the whole document. If you just want to see comments on one chapter or paragraph, highlight those. You could potentially sort & search comments on the right side by author/date/subject/tag/whatever, but plenty of interfaces for that have already been developed and it wouldn't be a hard add-in, as far as I can tell.
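Showing only the comments on a selection comes down to an interval-overlap test. A minimal sketch, again assuming comments are pinned to half-open character ranges, and assuming that "comments on a section" means any comment whose range overlaps the selection (not only ones entirely inside it). The names `Comment` and `comments_in_selection` are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Optional


@dataclass
class Comment:
    start: int  # half-open character range [start, end) the comment is pinned to
    end: int
    author: str
    text: str


def comments_in_selection(
    comments: List[Comment],
    sel_start: int,
    sel_end: int,
    sort_key: Optional[Callable[[Comment], Any]] = None,
) -> List[Comment]:
    """Return comments whose pinned range overlaps the selection [sel_start, sel_end).

    Two half-open ranges overlap exactly when each one starts before the other ends.
    """
    hits = [c for c in comments if c.start < sel_end and sel_start < c.end]
    if sort_key is not None:
        hits.sort(key=sort_key)  # e.g. by author, date, or tag
    return hits


comments = [
    Comment(4, 9, "ben", "Is 'quick' the right word?"),
    Comment(4, 19, "matt", "Tighten this phrase."),
    Comment(20, 25, "ann", "Nice verb."),
]
brown = comments_in_selection(comments, 10, 15)  # highlight "brown"
everything = comments_in_selection(comments, 0, 26, sort_key=lambda c: c.author)
```

A linear scan is fine for a few hundred comments; for the thousands a 300-page thesis might collect, an interval tree would avoid rescanning every comment on each new selection.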

Yeah. As I said, it's a pretty lousy demo and a poorly done image, but hopefully some of the ideas squeak across despite my hackish graphics skills. Thoughts? Worth building, not worth building? More importantly, how the heck do you go about making something like this? I come from a programming background of mostly control/simulation software, and should probably pick up Ruby on Rails and Ajax and such at some point; I'm not sure what tools this kind of thing would typically be built in.

Tuesday, November 14, 2006

The Prodigy Point

Years later, the things that caused you the most cognitive friction are the ones you're most likely to remember.

This reminded me of something Mark Penner and I once talked about several years back. I call it the Prodigy Point for lack of a better term. It's the point at which something you were originally "naturally good at" turns very, very hard. For instance, I've reached such a point in my drawing; up till this semester I'd tinkered happily along and learned how to turn out pretty decent stuff with no formal training. I was "naturally good" at art. I didn't need to think about it or work on it. It just happened to be that way.

Then this semester, BAM. Prof. Donis-Keller made me realize that what I'd really hit was a plateau of stagnation, and that to get any better I wouldn't be able to rely on my old "it just comes naturally!" habit any longer - for the first time, I'd have to struggle and study like everyone else - perhaps even harder than everyone else, since I wasn't used to struggling with drawing - and I was faced with the Prodigy Point (not that I was an art prodigy by any stretch of the imagination, but y'know). Do you keep zooming along on the plateau, or do you swallow your pride, set to work, and have a chance at becoming truly great at what you do, a greatness based on real skill and hard work, and not just talent and dumb luck?

I've hit that point with math, and have chosen to plateau on it for now (until winter break, when Rudin and I will meet again and I'll wrestle through Analysis). I've hit that point with music, and have been in a plateau for... I'm ashamed to say it, but almost 7 years. It's really cute when a toddler can pick out melodies on the keyboard by herself, but when the kid turns 20 and isn't much better, it's not cute any more. Man, it's hard to break out of that plateau - especially when your first "this is easy!" plateau might be at a way higher level than most people will ever reach through years of struggling. It's easy to rest on the laurels of arrogance. I definitely do it a lot.

You can hit the Prodigy Point with engineering, when you realize that messing with power tools in the school garage was awesome but you're going to have to hit the books and learn DiffEq to build something on the next level. You can hit it with programming, when you realize you've been a clever little hacker but can't wrap your mind around hardcore CS yet (learning Scheme was a major turning point for me in this). One of the things places like Olin and IMSA are good at is making a lot of folks - not all, but most - hit their Prodigy Points. Hard and repeatedly enough that you can't ignore their existence.

Do you love what you do because you honestly love it, enough to sweat and cry over it? Or do you love it because it's easy for you to be good at it and you enjoy coasting on the compliments? It's a turning point where something that was formerly easy becomes hard, and the way you deal with the Prodigy Point tells you a lot about who you are and what's really important to you.

Sunday, November 12, 2006

PBL doesn't work?

Why Minimal Guidance During Instruction Does Not Work: An Analysis of the Failure of Constructivist, Discovery, Problem-Based, Experiential, and Inquiry-Based Teaching by Kirschner, Sweller, and Clark

The abstract:

"Evidence for the superiority of guided instruction is explained in the context of our knowledge of human cognitive architecture, expert-novice differences, and cognitive load. While unguided or minimally-guided instructional approaches are very popular and intuitively appealing, the point is made that these approaches ignore both the structures that constitute human cognitive architecture and evidence from empirical studies over the past half century that consistently indicate that minimally-guided instruction is less effective and less efficient than instructional approaches that place a strong emphasis on guidance of the student learning process. The advantage of guidance begins to recede only when learners have sufficiently high prior knowledge to provide ‘internal’ guidance. Recent developments in instructional research and instructional design models that support guidance during instruction are briefly described."

In short, the authors are saying that Olin's "squirt-squirt"/"throw-'em-in-the-deep-end" method of education is not effective because it "does not fit human cognitive architecture."

Among other things, it says novices "in the deep end" are unable to build a cognitive map of the information, so much of the learning is lost the first time around ("spiral learning"), which is often the /only/ time around. Worked examples or process sheets (which guide you step by step through solving the problem yourself) are more effective, according to the studies they cite. It also presents the problem of "lack of clarity about the difference between learning a discipline and research (or work) in the discipline," which is the same as the gap between the engineering classroom and the engineering workplace we've been talking about at Olin.

It concludes with the question of why on earth we are using PBL if it's not effective. I agree that before you can do PBL, you need to have knowledge of where to find knowledge (which is not the same thing as prior knowledge). I also agree that you tend to perform "directed" tasks more efficiently, which is not the same as deep learning of that particular subject. Years later, the things that caused you the most cognitive friction are the ones you are most likely to remember.

I'm not sure how to design a study to properly respond to this, or whether one has been done - it's adding to my frustration at being essentially an illiterate in the educational field, and to my desire to fix this somehow (grad school?).

Update: Sam, Chris, and DJ all pointed out that the link to the paper was broken. It's been fixed - thanks, guys!

Hurtling towards the void

Anyhow, after a long practice we were walking out into the quiet, humid night, and we started talking. Even though it's November, graduation's rushing up on us. It's like hurtling towards a void. And when we reach it in 6 months, this place isn't ours any more, and we're not its students and we're not each other's (even though we'll always be Oliners and have a strong connection). It's making a lot of us cling more tightly to one another at the same time as we're hungrily drifting apart. It's a phenomenon that gets repeated every year with every graduating class, but it still hits hard when it's you in there.

I feel at home, but I know it's a home I can't stay in much longer. It's not something I'm going to dwell on overmuch; there's plenty to do and a lot to appreciate while things last, and a lot of possibilities to move forward into. Still, it seems like you never really realize what you've got until you realize you're going to lose it someday. And that moment is an odd one, when it comes.

Friday, November 10, 2006

Overloaders Anonymous

I thought the point was to cram as much knowledge and experience into my tiny little brain as possible. Going outside and lounging with friends? Feh. Must stuff physics into brain. Enjoying a leisurely dinner with my aunt? No way. Coding was so much more productive. The more things I could do, the better off I'd be in terms of what I could contribute to society, and so forth.

Nope.

There's a certain thin, clear sweetness to the rhythm of a grueling night of work. There is something to be said for single-minded devotion to a problem - or a host of problems - and a frantic period of activity, mad dashes, a mind bubbling with idea overload, knowing that you're making sacrifices because the thing you're working on is just that important. And you get a lot done, that's for sure. It makes life worth living.

But there's also a lovely part of life where you can go dancing, lie in the grass reading Milton, slowly sip some very hot, rich chicken broth, play music, hug people, and kind of... well, not do anything particular at all. It's glorious. It's productive in a different way. It makes life worth living in another way.

The first kind makes the second all the sweeter, and the second gives you space to do the first. It's like being an athlete; you need to push yourself to perform, exceed your limits, occasionally collapse gasping on the floor because you've run too fast or tried to do too many push-ups, or you won't get any better. But you need rest periods to rebuild and refresh. And I always love the feeling you get after a hard workout, after a long shower, when you finally lie down and almost glow with a light tiredness; it's satisfying and makes the rest seem much more vivid.

By doing less, I give myself the freedom to do more. I've done more intense sprints, seen more moments of gloriously quiet beauty, gotten more done, been happier, and touched more lives - as far as I can tell - ever since I started saying no. I'm still bad at it, as anyone will tell you. But I'm slowly finding my balance.

Do I miss pulling all-nighters? Yeah.

Do I like getting at least 4 hours of sleep a night? Oh yes. It feels so good. I haven't fallen asleep mid-class since I stopped pulling all-nighters; it makes a huge difference.

Do I wish I didn't have to make the tradeoff? Heck yeah.

Is the choice I made (sleep is important) the better one? Well, yes. For now, at least. And I'm okay with it.

I remember going to Gill's office sophomore year. This was when I was pushing 20 credits plus research and 2 jobs, committees, projects, FWOP, TAing, and pulling 1-2 all-nighters a week. I was drained, bleary-eyed, dog-tired, desperate to overload even more, and agonizing about the things I'd have to turn down ("aw, Gill, if only these three classes weren't scheduled simultaneously - I'd take them all!")

Now, y'all know that Gill's got the exact same problem of wanting to do everything. But he told me that life wasn't about trying to do everything; it was about making choices (one of those choices being the choice to try to do everything). And that if you had two options, and decided one was better, it didn't mean the other one wasn't good also. You make a choice, you go with that choice, and maybe you wish you could have done the second as well, but you know you made that first choice for a reason, and you accept that and enjoy the things you are doing instead of fretting about all the things you aren't.

Easier said than done, especially now that my world of opportunities has exploded; when you spend the first umpteen years of your life butting up against a ceiling of possibility and desperately looking for people to talk to and things to do, and all of a sudden that ceiling vanishes and possibilities appear, it takes a lot of self-control to not grab 'em all at once; it's like offering an everlasting buffet to a man who's been living in famine for 15 years. But if you eat too much, you'll get just as sick.